File Systems

October 29, 2016


The simplest Windows file systems to understand are the FAT (file allocation table) file systems: FAT12, FAT16, and FAT32.

Although relatively old, FAT file systems are still used on many storage systems such as removable storage media in digital cameras and mobile devices.

Given their widespread use and simple structure, FAT file systems are a good starting point for forensic analysts to understand file systems and recovery of deleted data.

It is also important to understand the fundamentals of NTFS, which is more complex than FAT and has substantially different structures.

FAT

A FAT-formatted volume uses directories and a file allocation table to organize files and folders. The root folder (e.g., C:\) is at a pre-specified location on the volume so that the operating system knows where to find it.

This folder contains a list of files and subdirectories on a floppy diskette with their associated properties, as shown in Figure 17.2 as viewed in X-Ways Forensics.

This view of the folder shows the starting cluster and date-time stamps associated with each file. Notably, FAT file systems do not record the last accessed time, but only the last accessed date.

Listing the contents of a volume using the dir command displays some of this information but does not show the starting cluster—a critical component from the file system perspective.

In addition to indicating where the file begins, the starting cluster directs the operating system to the appropriate entry in the FAT.

The FAT can be thought of as a list with one entry for each cluster in a volume.

Each entry in the FAT indicates what the associated cluster is being used for.

The following output from Norton Disk Editor shows a file allocation table from the same floppy diskette.

Clusters containing a zero are those free for allocation (e.g., when a file is deleted, the corresponding entry in the FAT is set to zero).

If a FAT entry is greater than zero, this is the number of the next cluster for a given file or folder.

For instance, the root folder indicates that file “skyways-getafix.doc” begins at cluster 184.

The associated FAT entry for cluster 184, shown in bold, indicates that the file is continued in cluster 185.

The FAT entry for cluster 185 indicates that the file is continued in cluster 186, and so on (like links in a chain) until the end-of-file (EOF) marker in cluster 225 is reached.

In this example, cluster 226 relates to a different file (“todo.txt”) that occupies only one cluster and therefore does not need to reference any other clusters and simply contains an EOF.
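The chaining described above can be sketched in a few lines of Python. This is only an illustrative model: the FAT is represented as a dictionary rather than raw bytes, `EOF_MARKER` is a stand-in for the real end-of-file value, and the sample table assumes the file runs contiguously from cluster 184 to cluster 225, which the text implies but does not show in full.

```python
EOF_MARKER = "EOF"  # stand-in for the end-of-file value in a real FAT
FREE = 0            # a zero entry means the cluster is unallocated


def follow_chain(fat, start_cluster):
    """Return the clusters occupied by a file, in order, by walking the FAT."""
    chain = []
    seen = set()
    cluster = start_cluster
    while True:
        if cluster in seen:
            raise ValueError(f"loop detected at cluster {cluster}")
        seen.add(cluster)
        chain.append(cluster)
        entry = fat.get(cluster, FREE)
        if entry == EOF_MARKER:
            return chain
        if entry == FREE:
            raise ValueError(f"chain broken: cluster {cluster} is marked free")
        cluster = entry  # a non-zero entry is the number of the next cluster


# Toy FAT modelled on the example: 184 -> 185 -> ... -> 225 (EOF),
# plus cluster 226 holding a one-cluster file.
fat = {c: c + 1 for c in range(184, 225)}
fat[225] = EOF_MARKER
fat[226] = EOF_MARKER

skyways = follow_chain(fat, 184)
todo = follow_chain(fat, 226)
print(len(skyways), skyways[0], skyways[-1])  # 42 184 225
print(todo)                                   # [226]
```

The "chain broken" error is the situation a deleted file leaves behind: its FAT entries are zeroed, so the links between clusters are gone even though the data may still be on disk.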

Subdirectories are just a special type of file containing information such as names, attributes, dates, times, sizes, and the first cluster of each file on the system.
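To make the contents of a folder entry concrete, here is a minimal sketch that decodes one 32-byte FAT directory entry using the standard short-name layout. The sample entry is synthetic: the short name `HANDBR~1.DOC`, the dates, and the size are hypothetical values chosen for illustration, and only the starting cluster (79) comes from the example in the text. Note that the last accessed field is a date only, with no time component.

```python
import struct
from datetime import date, datetime

# One 32-byte short-name directory entry, little-endian, no padding.
ENTRY = struct.Struct("<11sBBBHHHHHHHI")


def fat_date(value):
    """Decode a FAT date: 7 bits year since 1980, 4 bits month, 5 bits day."""
    return date(1980 + (value >> 9), (value >> 5) & 0x0F, value & 0x1F)


def fat_datetime(d, t):
    """Combine a FAT date with a FAT time (stored at 2-second resolution)."""
    day = fat_date(d)
    return datetime(day.year, day.month, day.day,
                    t >> 11, (t >> 5) & 0x3F, (t & 0x1F) * 2)


def parse_entry(raw):
    (name, attr, _reserved, _tenths, ctime, cdate, adate,
     cluster_hi, wtime, wdate, cluster_lo, size) = ENTRY.unpack(raw)
    base = name[:8].decode("ascii").rstrip()
    ext = name[8:].decode("ascii").rstrip()
    return {
        "name": base + ("." + ext if ext else ""),
        "is_directory": bool(attr & 0x10),
        "created": fat_datetime(cdate, ctime),
        "modified": fat_datetime(wdate, wtime),
        "accessed": fat_date(adate),  # date only: FAT keeps no access time
        "start_cluster": (cluster_hi << 16) | cluster_lo,  # high word is 0 on FAT12/16
        "size": size,
    }


def enc_date(y, m, d):
    return ((y - 1980) << 9) | (m << 5) | d


def enc_time(h, mi, s):
    return (h << 11) | (mi << 5) | (s // 2)


# Synthetic entry: every value except the starting cluster is hypothetical.
raw = ENTRY.pack(b"HANDBR~1DOC", 0x20, 0, 0,
                 enc_time(10, 30, 20), enc_date(2003, 4, 1),
                 enc_date(2003, 4, 2), 0,
                 enc_time(10, 31, 0), enc_date(2003, 4, 1),
                 79, 19456)
entry = parse_entry(raw)
print(entry["name"], entry["start_cluster"], entry["accessed"])
```

A real parser would also need to handle zeroed date fields, deleted entries, and long file name entries, all of which this sketch ignores.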

For instance, before the folder named “april” on the floppy diskette was deleted and overwritten, it occupied cluster 157 and contained the following:

When an individual instructs a computer to open a file in a subfolder (e.g., “C:\april\handbright.doc”), the operating system goes to the root folder, determines which cluster contains the desired subfolder (cluster 157 for “april”), and uses the folder information in that cluster to determine the starting cluster of the desired file (cluster 79 for “handbright.doc”).

The folder also contains long file name entries; the cluster value displayed for these entries (e.g., 7602176 for handbright.doc) is not an actual starting cluster.

If the file is larger than one cluster, the operating system refers to the FAT for the next cluster for this file.

The entire file is read by repeating this “chaining” process until an EOF marker is reached.

FAT12 uses 12-bit fields for each entry in the FAT and is mainly used on floppy diskettes.
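Because 12 bits is one and a half bytes, two FAT12 entries share three bytes on disk. The sketch below shows one common way to unpack them; `pack_fat12` is a hypothetical helper that exists only to build sample data for the demonstration.

```python
def fat12_entry(fat_bytes, n):
    """Return the 12-bit FAT12 entry for cluster n."""
    offset = n + n // 2  # each entry occupies 1.5 bytes
    pair = int.from_bytes(fat_bytes[offset:offset + 2], "little")
    # Odd entries use the upper 12 bits of the pair, even entries the lower 12.
    return pair >> 4 if n & 1 else pair & 0x0FFF


def pack_fat12(entries):
    """Hypothetical helper: pack 12-bit values into FAT12 byte layout."""
    out = bytearray()
    for i in range(0, len(entries), 2):
        a = entries[i]
        b = entries[i + 1] if i + 1 < len(entries) else 0
        out += bytes([a & 0xFF,
                      ((a >> 8) & 0x0F) | ((b & 0x0F) << 4),
                      (b >> 4) & 0xFF])
    return bytes(out)


# Entries 0 and 1 are reserved; cluster 2 -> 3 -> 4 (EOF), cluster 5 is free.
entries = [0xFF0, 0xFFF, 3, 4, 0xFFF, 0]
table = pack_fat12(entries)
decoded = [fat12_entry(table, n) for n in range(len(entries))]
print(decoded == entries)  # True
```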

FAT16 uses 16-bit fields to identify a particular cluster in the FAT and there must be fewer than 65,525 clusters on a FAT16 volume.

This is why larger clusters are needed on larger volumes—a 1-GB volume can be fully utilized with 65,525 16-kB clusters (32 sectors per cluster), whereas a 2-GB volume requires clusters that are twice as big: that is, 65,525 32-kB clusters (64 sectors per cluster).
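The arithmetic behind those figures can be checked with a short calculation. This sketch assumes 512-byte sectors, cluster sizes that double at each step, and decimal gigabytes (10^9 bytes); with binary gigabytes, 65,525 clusters of 16 kB fall slightly short of 1 GB, so the figures in the text are best read as approximate.

```python
MAX_FAT16_CLUSTERS = 65_525  # cluster limit quoted in the text
SECTOR = 512                 # assumed sector size in bytes


def min_cluster_size(volume_bytes, max_clusters=MAX_FAT16_CLUSTERS):
    """Smallest power-of-two cluster size that covers the whole volume."""
    size = SECTOR
    while size * max_clusters < volume_bytes:
        size *= 2
    return size


for gigabytes in (1, 2):
    size = min_cluster_size(gigabytes * 10**9)
    print(f"{gigabytes} GB: {size // 1024} kB clusters, "
          f"{size // SECTOR} sectors per cluster")
# 1 GB: 16 kB clusters, 32 sectors per cluster
# 2 GB: 32 kB clusters, 64 sectors per cluster
```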

FAT32 was created to deal with larger hard drives by using 28-bit fields in the FAT (4 bits of the 32-bit fields are “reserved”).
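In practice this means a tool reading a FAT32 entry should mask off the top four bits before interpreting the value. A minimal sketch follows, using the commonly documented FAT32 marker values; the function name and the returned labels are illustrative choices, not part of any standard API.

```python
FAT32_MASK = 0x0FFFFFFF     # only the low 28 bits carry the cluster number
FAT32_BAD = 0x0FFFFFF7      # bad-cluster marker
FAT32_EOC_MIN = 0x0FFFFFF8  # values from here up mark the end of a chain


def classify_fat32(raw_entry):
    """Return (kind, next_cluster) for a raw 32-bit FAT32 entry."""
    value = raw_entry & FAT32_MASK  # ignore the 4 reserved bits
    if value == 0:
        return ("free", None)
    if value == FAT32_BAD:
        return ("bad", None)
    if value >= FAT32_EOC_MIN:
        return ("eof", None)
    return ("next", value)


print(classify_fat32(0xF0000005))  # ('next', 5): reserved bits are ignored
print(classify_fat32(0x0FFFFFFF))  # ('eof', None)
```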

FAT32 also makes better use of space, by using smaller cluster sizes than FAT16—this can be a disadvantage for investigators because it can reduce the amount of slack space.
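The effect of cluster size on slack space is easy to quantify. The sketch below computes the unused bytes in the last cluster of a file; the 10,000-byte file and the two cluster sizes are hypothetical values picked for the comparison.

```python
def file_slack(file_size, cluster_size):
    """Bytes left unused in the final cluster allocated to a file."""
    if file_size == 0:
        return 0  # an empty file has no cluster allocated
    remainder = file_size % cluster_size
    return 0 if remainder == 0 else cluster_size - remainder


size = 10_000  # hypothetical file size in bytes
print(file_slack(size, 32 * 1024))  # 22768 bytes of slack with 32 kB clusters
print(file_slack(size, 4 * 1024))   # 2288 bytes of slack with 4 kB clusters
```

Smaller clusters leave less room at the end of each file for remnants of older data, which is the disadvantage for investigators mentioned above.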

NTFS

NTFS is significantly different from FAT, storing file system information in several system files including a Master File Table (named $MFT), supporting larger disks more efficiently (resulting in less slack space), and providing file and folder level security using Access Control Lists (ACLs), and more.

NTFS is designed with disaster recovery in mind, storing a copy of the $BOOT system file at both the beginning and end of the volume.

In addition, a copy of the first four records in the $MFT file is stored in another system file named $MFTMIRR located in the middle of the volume.

These copies of information can be useful from a forensic perspective when attempting to recover files.

The $MFT contains a list of records, each 1024 bytes in length, that store most of the information needed to locate data on the disk.

Each entry in the $MFT represents a file or folder, and stores associated attributes including $STANDARD_INFORMATION and $DATA as shown in Figure 17.3 using the SleuthKit.

The $STANDARD_INFORMATION attribute stores the created, last modified, and last accessed dates and times.

The $DATA attribute either contains the actual file contents of small files (called resident files) or the location on disk of large files (non-resident files).
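The resident versus non-resident distinction shows up as a single flag byte in each attribute header. The following sketch walks the attributes of an MFT record and reports which ones are resident. It makes several simplifying assumptions: the records are synthetic and built by the hypothetical helpers `resident_attr`, `non_resident_stub`, and `build_record`; the update sequence (fixup) values that a real record requires are not applied; and the data runs of non-resident attributes are not decoded.

```python
import struct

ATTR_NAMES = {0x10: "$STANDARD_INFORMATION", 0x30: "$FILE_NAME", 0x80: "$DATA"}
END_MARKER = 0xFFFFFFFF


def walk_attributes(record):
    """Return (name, non_resident, content) for each attribute in a record."""
    if record[:4] != b"FILE":
        raise ValueError("not an MFT record")
    (offset,) = struct.unpack_from("<H", record, 20)  # offset of first attribute
    found = []
    while offset + 8 <= len(record):
        attr_type, length = struct.unpack_from("<II", record, offset)
        if attr_type == END_MARKER or length == 0:
            break
        non_resident = record[offset + 8] != 0
        content = None
        if not non_resident:
            # Resident: content size and offset are stored in the header itself.
            size, rel = struct.unpack_from("<IH", record, offset + 16)
            content = record[offset + rel:offset + rel + size]
        found.append((ATTR_NAMES.get(attr_type, hex(attr_type)),
                      non_resident, content))
        offset += length
    return found


# Hypothetical helpers used only to build synthetic records for the demo.
def resident_attr(attr_type, content):
    length = (24 + len(content) + 7) & ~7  # attributes are 8-byte aligned
    header = struct.pack("<IIBBHHHIHBB", attr_type, length, 0, 0, 24,
                         0, 0, len(content), 24, 0, 0)
    return (header + content).ljust(length, b"\x00")


def non_resident_stub(attr_type, length=72):
    return struct.pack("<IIB", attr_type, length, 1).ljust(length, b"\x00")


def build_record(attrs):
    header = struct.pack("<4s16xHH", b"FILE", 56, 0x0001).ljust(56, b"\x00")
    body = b"".join(attrs) + struct.pack("<I", END_MARKER)
    return (header + body).ljust(1024, b"\x00")


small = build_record([resident_attr(0x80, b"hello")])
large = build_record([non_resident_stub(0x80)])
print(walk_attributes(small))  # [('$DATA', False, b'hello')]
print(walk_attributes(large))  # [('$DATA', True, None)]
```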

Directories are treated much like any other file in NTFS but are called index entries and store folder entries in a B-Tree to accelerate access and facilitate resorting when entries are deleted.

Instead of using ASCII to represent data such as file and folder names, NTFS uses an encoding scheme called Unicode.

This difference must be taken into account when performing text searches.
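As an illustration of why encoding matters for keyword searches, the sketch below looks for a term in both its ASCII form and its UTF-16 little-endian form, which is how NTFS stores names. The function name and the sample buffer are hypothetical.

```python
def find_keyword(data, keyword):
    """Return sorted (offset, encoding) hits for keyword in a byte buffer."""
    hits = []
    for label, encoding in (("ascii", "ascii"), ("utf-16le", "utf-16-le")):
        try:
            needle = keyword.encode(encoding)
        except UnicodeEncodeError:
            continue  # keyword cannot be expressed in this encoding
        start = 0
        while (pos := data.find(needle, start)) != -1:
            hits.append((pos, label))
            start = pos + 1
    return sorted(hits)


# Sample buffer: the same word stored once as UTF-16LE and once as ASCII.
data = b"\x00" * 4 + "april".encode("utf-16-le") + b"--" + b"april"
print(find_keyword(data, "april"))  # [(4, 'utf-16le'), (16, 'ascii')]
```

A search that only looks for the ASCII byte sequence would miss the first hit entirely, because every character in the UTF-16 form is followed by a zero byte.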

NTFS has a more formal file initialization process than FAT file systems, but the same issues can arise, such as storage space reserved for a new file not being used in its entirety, or at all, which can be misinterpreted as backdating.

When only a portion of the disk space that was reserved for a new file is used to store data associated with that file, this leaves a discrepancy between the logical file size and the actual amount of data stored in the file.

As a result, you can have a file that appears to have a logical size larger than the actual amount of data stored for that file.

The space between the end of valid data and the end of file is called uninitialized space.

In NTFS, there are two important concepts of file length: the End of File (EOF) marker and the Valid Data Length (VDL).

The EOF indicates the actual length of the file. The VDL identifies the length of valid data on disk.

Any reads between VDL and EOF automatically return 0 in order to preserve the C2 object reuse requirement (Microsoft fsutil documentation).

Uninitialized space is similar in concept to file slack except that it is contained within the logical file size.

Unlike file slack that is no longer associated with a file, data in uninitialized space are in a kind of limbo, trapped at the end of an allocated file but not actually a part of that file as depicted in Figure 17.4.

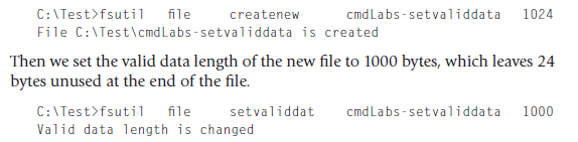

The effects of file initialization behaviors are most easily demonstrated on Windows XP with fsutil as shown here. First, we create a new file that can contain 1024 bytes:

NTFS captures the difference between logical file size and valid data length in two MFT fields as shown in Figure 17.5.

The significance of this from a forensic analysis standpoint is that a file with a valid data length smaller than the logical file size can contain data associated with two files: data associated with the new file (VDL bytes), and data from the old file in uninitialized space (logical file size minus VDL bytes).
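The difference between what an application reads and what is actually on disk can be modelled in a few lines. This is a toy model under stated assumptions: `raw_clusters` stands for the bytes physically stored in the file's allocated clusters, and the sample contents are invented for the demonstration.

```python
def logical_read(raw_clusters, vdl, eof):
    """What an application sees: bytes between VDL and EOF read back as zeros."""
    if not 0 <= vdl <= eof <= len(raw_clusters):
        raise ValueError("expected 0 <= VDL <= EOF <= allocated size")
    return raw_clusters[:vdl] + b"\x00" * (eof - vdl)


def uninitialized_space(raw_clusters, vdl, eof):
    """What a forensic tool can examine on disk between VDL and EOF."""
    if not 0 <= vdl <= eof <= len(raw_clusters):
        raise ValueError("expected 0 <= VDL <= EOF <= allocated size")
    return raw_clusters[vdl:eof]


# Invented on-disk contents: 7 bytes of new data, then residue from an old file.
raw = b"NEWDATA" + b"old-file-residue"
vdl, eof = 7, len(raw)

print(logical_read(raw, vdl, eof))         # new data followed by 16 zero bytes
print(uninitialized_space(raw, vdl, eof))  # b'old-file-residue'
```

The second function returns exactly logical file size minus VDL bytes, which is the portion that belongs to the old file.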

From a forensic analysis perspective, this uninitialized space can be beneficial. While various disk cleaning utilities can be configured to wipe file slack, they generally do not touch data in uninitialized space.

As a result, deleted data can remain in uninitialized space indefinitely, despite data destruction efforts, and can be salvaged by forensic analysts.

NTFS creates MFT entries as they are needed and, when a file is deleted, NTFS simply marks the associated MFT entry as deleted and available for a new file.

It is possible to recover all of the information about a deleted file from the MFT entry, including the data for resident files and the location of data on disk for non-resident files.
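Whether an MFT entry is allocated or marked as deleted is recorded in a flags field in the record header. A minimal sketch of checking it follows; it assumes the usual layout with the 16-bit flags at byte offset 22, and `make_header` is a hypothetical helper that builds a synthetic header for the demonstration.

```python
import struct

IN_USE = 0x0001        # cleared when the file is deleted
IS_DIRECTORY = 0x0002  # set for folders


def record_status(record):
    """Describe an MFT record as an allocated or deleted file or folder."""
    if record[:4] != b"FILE":
        raise ValueError("not an MFT record")
    (flags,) = struct.unpack_from("<H", record, 22)
    kind = "folder" if flags & IS_DIRECTORY else "file"
    state = "allocated" if flags & IN_USE else "deleted"
    return f"{state} {kind}"


def make_header(flags):
    """Hypothetical helper: a synthetic record header with the given flags."""
    return struct.pack("<4s18xH", b"FILE", flags).ljust(1024, b"\x00")


for flags in (0x0001, 0x0000, 0x0003, 0x0002):
    print(hex(flags), record_status(make_header(flags)))
```

Since deletion only clears the in-use flag, the rest of the record, including its attributes, stays readable until the entry is reused.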

Recovering deleted files in NTFS can be complicated by the fact that unused entries in the MFT are reused before new ones are created.

Therefore, when a file is deleted, the next file that is created may overwrite the MFT entry for the deleted file.

However, if many files are created and then deleted, causing the MFT to grow, those entries will remain indefinitely as new files will reuse earlier entries in the MFT.
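A toy simulation helps show why some deleted entries are overwritten quickly while others survive. The model below assumes that a new file always takes the lowest-numbered free entry; that allocation rule is a simplification made for illustration, and `ToyMft` is not a representation of the real on-disk structure.

```python
class ToyMft:
    """Toy model of MFT entry reuse (assumes lowest free entry is taken first)."""

    def __init__(self):
        self.records = []  # list index is the record number

    def create(self, name):
        for number, record in enumerate(self.records):
            if not record["in_use"]:
                # Reusing a free entry overwrites the deleted file's metadata.
                self.records[number] = {"name": name, "in_use": True}
                return number
        self.records.append({"name": name, "in_use": True})
        return len(self.records) - 1

    def delete(self, number):
        self.records[number]["in_use"] = False  # metadata stays in place

    def recoverable(self):
        return [r["name"] for r in self.records if not r["in_use"]]


mft = ToyMft()
numbers = [mft.create(f"file{i}.doc") for i in range(5)]
for n in numbers:
    mft.delete(n)

reused = mft.create("new.doc")
print(reused)             # 0: the earliest free entry is taken
print(mft.recoverable())  # file1.doc through file4.doc are still recoverable
```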

Another feature of NTFS that makes it more difficult to recover a deleted file is that it keeps folder entries sorted by name.

When a file is deleted, a resorting process occurs that may overwrite the deleted folder entry with entries lower down in the folder, breaking a crucial link between the file name and the data on disk.

NTFS is a journaling file system, retaining a record of file system operations that can be used to repair any damage caused by a system crash.

There are currently no tools available for interpreting the journal file (called “$Logfile”) on NTFS to determine what changes were made.

This is a potentially rich source of information from a forensic standpoint that will certainly be exploited in the future.

DATES AND TIMES

When investigating computer-related crime, digital evidence examiners need an understanding of how date and time values are stored and converted.
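As one example of such a conversion, NTFS timestamps are stored in the Windows FILETIME format: a 64-bit count of 100-nanosecond intervals since January 1, 1601 (UTC). The sketch below converts such a value to a Python `datetime`; the function name is an illustrative choice, and sub-microsecond precision is discarded.

```python
from datetime import datetime, timedelta, timezone

FILETIME_EPOCH = datetime(1601, 1, 1, tzinfo=timezone.utc)


def filetime_to_datetime(filetime):
    """Convert a 64-bit FILETIME value to a timezone-aware UTC datetime."""
    # FILETIME counts 100 ns ticks; 10 ticks make one microsecond.
    return FILETIME_EPOCH + timedelta(microseconds=filetime // 10)


# 116444736000000000 ticks is the well-known offset to the Unix epoch.
print(filetime_to_datetime(116444736000000000))  # 1970-01-01 00:00:00+00:00
```

Because the stored value is in UTC, any comparison with local wall-clock times requires applying the system's time zone settings separately.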

FILE SYSTEM TRACES

An individual’s actions on a computer leave many traces that digital investigators can use to glean what occurred on the system.

For instance, when a file is downloaded from the Internet, the date-time stamps of this file represent when the file was placed on the computer.

If this file is subsequently accessed, moved, or modified, the date-time stamps may be altered to reflect these actions.

